Researchers from Emory University and Princeton University are making the case for a fundamental rethinking of how the world prepares for outbreaks — one that borrows a page from the same artificial intelligence playbooks behind ChatGPT and AlphaFold.

In a perspective published in the Proceedings of the National Academy of Sciences, the researchers argue that epidemic modeling is overdue for a transformation. Current disease models are built one pathogen at a time, requiring extensive calibration before they can generate useful forecasts. When a novel threat emerges, modelers are essentially starting from scratch, a vulnerability that COVID-19 exposed at catastrophic cost.

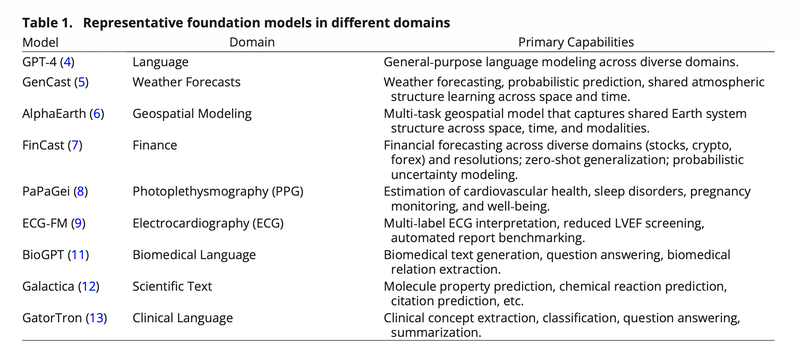

The proposed alternative is an "epidemic foundation model": a single, large AI system pretrained on historical outbreak data spanning dozens of diseases, geographies, and data types, then rapidly adapted to a new threat with minimal additional information.

Rather than building bespoke models for each disease, the authors envision a pretrained backbone that can be fine-tuned with what they call "minimal, context-specific information" — the epidemiological equivalent of a large language model, trained broadly and deployed quickly.

Pandemic risk remains one of the least tractable exposures in insurance and reinsurance, in part because models lack the generalizability that catastrophe modelers take for granted in other perils.

When the World Bank issued its first pandemic bonds in 2017, the instruments famously failed to pay out during the early months of the COVID-19 outbreak, triggering widespread criticism of the parametric triggers that governed them.

Those triggers — tied to case counts, death rates, and geographic spread thresholds — reflected the same fundamental limitations cited by the recent research: models calibrated to known pathogens, in known settings, with known data quality.

A foundation model architecture, if it delivers on its promise, could compress the lag between outbreak detection and actionable probabilistic forecasts.

The paper is candid about the practical obstacles, with the authors identifying three core challenges that distinguish epidemic modeling from other domains where foundation models have succeeded:

Fragmented data. Surveillance quality "differs markedly across countries and regions," and metadata standards remain inconsistent even at the level of country codes and date formats — complicating the large-scale, well-curated pretraining that foundation models require.

Heterogeneous disease dynamics. Dengue, measles, and COVID-19 operate under fundamentally different transmission regimes. A viable foundation model must learn "generalizable representations that capture shared transmission principles" while simultaneously accommodating what the authors describe as "qualitatively and structurally different dynamical regimes" across pathogens.

Nonstationary processes. Unlike weather or financial time series, epidemic systems shift in ways that are difficult to anticipate. Human behavior, immunity levels, and public health interventions can all alter the underlying dynamics mid-outbreak (what the authors describe as contending "not only with nonstationary data but with nonstationary processes) where the underlying generative mechanisms themselves can shift."

The interpretability challenge will resonate with anyone who has sat through a cat model vendor presentation. The authors are direct about the issue: deep learning models "excel at extracting statistical patterns and can yield accurate forecasts, but often at the cost of interpretability and transparency."

Early proof-of-concept work offers some encouragement.

The authors point to CAPE (Covariate-Adjusted Pre-training for Generalized Epidemic Time Series Forecasting), a model developed within the same research group, which was pretrained on 17 diseases and demonstrated the ability to forecast COVID-19 despite never encountering it during training. But they are careful to frame this as a starting point: CAPE and similar efforts "remain focused on a single epidemic task" and "still face key challenges," including the nonstationary dynamics and data sparsity problems outlined above.

Beyond algorithms, the roadmap calls for standardized open datasets, shared benchmarking protocols, and cross-disciplinary collaboration. The authors describe it as an "iterative process — using models to inform data collection, which in turn improves the models."