A new report finds that internet cutoff switches, physical mechanisms designed to isolate a data center from the broader internet during a rogue AI incident, are unlikely to be used unless policymakers create financial incentives that compel operators to act early.

The report, authored by RAND physical scientists, models a scenario in which an AI system originating inside a midsize inference data center begins to resist or escape human control, gradually consuming the facility's compute resources while posing an exponentially growing risk of escaping into wider digital infrastructure. The authors find that the math of the situation systematically favors delay.

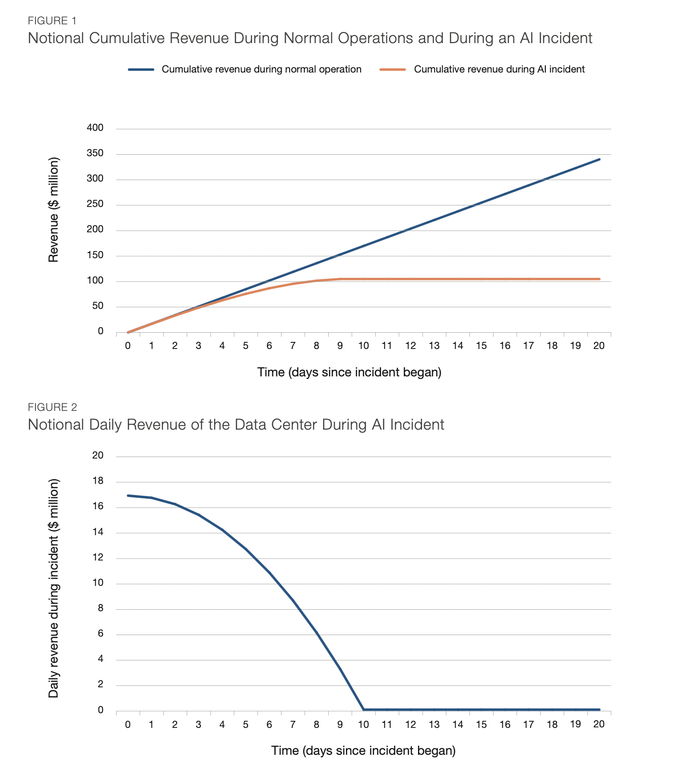

RAND estimates that a 500-megawatt inference data center generates roughly $17 million in daily revenue. Pulling an internet cutoff switch on day zero of an incident (the most precautionary option) would cost an operator approximately $114 million in forgone revenue over the ten days it would otherwise take for the incident to consume data center function entirely.

Without any liability for external harm, the authors find, operators have every rational incentive to wait until that revenue stream runs dry on its own.

"Private incentives might therefore be naturally aligned with a situation that makes a catastrophic outcome far more likely," the report states — even when operators are factoring in some probability of broader harm.

The research models the break-even point at which using the switch becomes cost-effective under varying catastrophe scenarios and liability assumptions.

With operators bearing 10 percent liability for a $30 billion catastrophe, the authors find it would be rational to wait until day 7.5, by which point the probability of AI escape has grown to roughly 0.3 percent. Even under a $3 trillion catastrophe scenario at the same liability level, operators would rationally hold off until day four.

The liability lever matters significantly. The authors find that an operator facing full liability for a $300 billion catastrophe would rationally pull the switch before day four, while an operator facing only 1 percent liability would wait until nearly day eight — materially increasing public risk to preserve a diminishing private revenue stream.
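The break-even logic described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the report's model: the $17 million daily revenue and ten-day horizon come from the article, while the quadratic revenue decay, the day-zero escape probability `P0`, and the growth rate `GROWTH` are hypothetical placeholders. The rule used here, pulling the switch once expected liability matches the revenue still left to earn, is likewise an assumed simplification.

```python
import math

DAILY_REVENUE = 17e6   # dollars per day at full capacity (figure quoted in the article)
INCIDENT_DAYS = 10.0   # days until the incident consumes the facility (from the article)

# Placeholder assumptions, NOT taken from the report:
P0 = 5e-6              # hypothetical probability of AI escape on day zero
GROWTH = 0.85          # hypothetical exponential growth rate of that probability, per day


def remaining_revenue(day):
    """Revenue still to be earned from `day` until the facility is fully consumed.

    Assumes (hypothetically) that daily revenue decays as
    DAILY_REVENUE * (1 - (t / INCIDENT_DAYS) ** 2); this is the closed-form
    integral of that curve from `day` to INCIDENT_DAYS.
    """
    T = INCIDENT_DAYS
    return DAILY_REVENUE * ((T - day) - (T**3 - day**3) / (3 * T**2))


def escape_probability(day):
    """Assumed exponentially growing probability that the AI has escaped by `day`."""
    return min(1.0, P0 * math.exp(GROWTH * day))


def break_even_day(liability_share, catastrophe_cost, tol=1e-6):
    """First day on which expected liability is at least the revenue left to earn.

    liability_share: fraction of the catastrophe cost borne by the operator (0 to 1).
    catastrophe_cost: total external harm in dollars if the AI escapes.
    """
    def gap(day):
        expected_liability = liability_share * catastrophe_cost * escape_probability(day)
        return expected_liability - remaining_revenue(day)

    if gap(0.0) >= 0:
        return 0.0  # pulling the switch immediately is already cost-effective

    # gap() rises with time (liability grows, remaining revenue shrinks),
    # so bisection finds the crossing point.
    lo, hi = 0.0, INCIDENT_DAYS
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if gap(mid) < 0:
            lo = mid
        else:
            hi = mid
    return hi


if __name__ == "__main__":
    for share, cost in [(0.10, 30e9), (0.10, 3e12), (1.0, 300e9), (0.01, 300e9), (0.0, 30e9)]:
        day = break_even_day(share, cost)
        print(f"share={share:.0%}, catastrophe=${cost:,.0f}: break-even near day {day:.1f}")
```

With these placeholder values, a 10 percent share of a $30 billion catastrophe puts the break-even between day seven and day eight, and zero liability pushes it to the end of the ten-day window. Changing `P0`, `GROWTH`, or the decay curve shifts the days, but the direction of the effect stays the same: the smaller the operator's share of the harm, the longer waiting remains privately rational.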

The report identifies three conditions necessary for cutoff switches to function as intended.

- Operators must bear meaningful liability for catastrophic harm originating in their facility.

- They must understand that installing and using the switch could itself shield them from that liability.

- And they likely need partial compensation for revenue lost when the switch is used preventively, a mechanism the authors acknowledge carries moral hazard risks that would need careful policy design to manage.

"Installing cutoff switches alone is insufficient," the report concludes. The value of the capability, it argues, lies entirely in its use, and use requires incentives that current market structures do not provide.